That's a lot of stalkerware in the wild. And this exploit only covers two such apps. What's wrong with people that they install this kind of crap on their loved ones' smartphones?

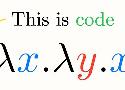

A neat little introduction to an important field in computer science. Lambda calculus is not as well known as it should be, yet it has very important ramifications in several fields.

More feedback about uv use in the wild. This is getting really close to becoming the de facto solution for new projects.

Early days but this looks like an interesting solution to democratize the inference of large models.

This is a good satire which nicely shows the excuses people use to avoid testing first.

I like this paper, it's well balanced. The conclusion says it all: if you're not actively working on reducing the harms then you might be doing something unethical. It's not just a toy to play with, you have to think about the impacts and actively reduce them.

I'm not sure I would phrase it like this but there's quite some truth to it. It's important to figure out what we take for granted and to open the black boxes. This is where one finds mastery.

Interesting research, looking forward to the follow-ups to see how it evolves over time. For sure the number of issues is still way too high to build trustworthy systems around search and news.

This might be accidental but it highlights the lack of transparency on how those models are produced. It also means we should get ready for future generations of such models to turn into very subtle propaganda machines. Indeed, even if it's accidental for now, I doubt that will be the case much longer.

A good reminder that everything they buy, they turn into a surveillance system... This time under the pretense of security.

People really need to be careful about the short term productivity boost... If it kills maintainability in the process, you're trading that short term productivity for a crashing long term productivity.

Looks like an interesting DSL to write high performance Python code.

This is definitely a problem. It's bound to influence how technologies are chosen on software projects.

The security implications of using LLMs are real. The high complexity and low explainability of such models open the door to hiding attacks in plain sight.

This is obviously all good news on the Wayland front. Took time to get there, got lots of justified (and even more unjustified) complaints, but now things are looking bright.

Due to NetworkManager you forgot how to set up an interface and the networking stack manually? Here is a good summary of the important steps.

Some powerful bullies want to make the life of editors impossible. Looks like the foundation has the right tools in store to protect those contributors.

This is an interesting way to frame the problem. We can't rely too much on LLMs for computer science problems without losing important skills and hindering learning. This is to be kept in mind.

Surprising idea... I guess I'll mull this one over and maybe try. This is not a small change of habit though.

Alright, this piece is full of vitriol... And I like it. The CES has clearly become a mirror of the absurdities our industry is going through. The vision proposed by a good chunk of the companies is unappealing and lazy.